Today at CWTS we are releasing the 2016 edition of our Leiden Ranking. The CWTS Leiden Ranking is a web tool that provides a suite of bibliometric statistics for a large number of research-intensive universities worldwide. We try to design and construct our ranking in accordance with the most recent insights in the field of bibliometrics and scientometrics. New editions of the ranking therefore usually include improvements compared with earlier editions, for instance by refining the methodology, improving the presentation of the statistics, or increasing the number of universities included in the ranking.

In the 2016 edition of our ranking, the main improvement lies in the presentation of the bibliometric statistics. We emphasize a multidimensional perspective on the statistics and focus less on the traditional unidimensional notion of ranking universities. The approach that we have adopted is reflected by the motto of the Leiden Ranking 2016: Moving beyond just ranking.

The traditional approach to university ranking

A traditional ‘league table’ university ranking offers a list of universities ordered by a certain indicator, such as the percentage of highly cited publications produced by a university. The university at the top of the list is at rank 1, the next university in the list is at rank 2, and so on. When such a list-based presentation is used, the performance of a university is typically interpreted in terms of its rank relative to other universities. The performance of a university may for instance be summarized by observing that the university is at rank 250 worldwide and at rank 5 within its own country. Likewise, it may be claimed that the performance of a university has improved because its rank has improved relative to the previous edition of a ranking.

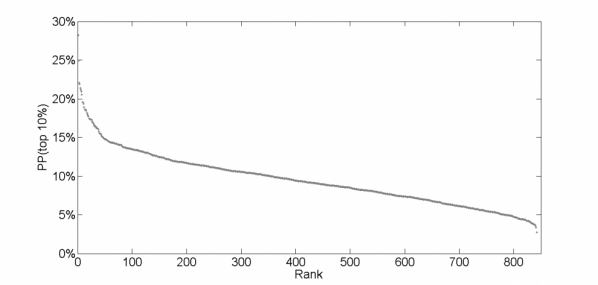

However, interpreting a university ranking exclusively in terms of the ranks of universities poses some serious problems. One problem is that in some cases quite a large difference in ranks corresponds to a very minor difference in the indicator from which the ranks have been obtained. For instance, if we rank the universities in the 2016 edition of the Leiden Ranking based on their percentage of highly cited publications (where a publication is considered highly cited if it belongs to the top 10% most cited publications in its field), we find that the universities at ranks 400 and 425 differ only slightly in their percentage of highly cited publications. The university at rank 400 has 9.4% highly cited publications, while for the university at rank 425 the percentage is just slightly lower (9.2%). The accompanying figure shows that for many universities in the Leiden Ranking there is a substantial number of other universities with a comparable percentage of highly cited publications. Hence, it is doubtful whether a difference of, for instance, 25 ranking positions has any practical significance and, for that matter, whether a university at rank 400 can really claim to outperform a university at rank 425.
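The gap between rank differences and indicator differences can be made concrete with a small sketch. The indicator values below are invented for illustration and are not taken from the Leiden Ranking. The helper name `peers_within` and the tolerance of 0.25 percentage points are likewise assumptions.

```python
def peers_within(values, index, tolerance):
    """Count the other universities whose indicator value lies within
    `tolerance` percentage points of the university at `index`."""
    target = values[index]
    return sum(
        1
        for i, value in enumerate(values)
        if i != index and abs(value - target) <= tolerance
    )


# Invented percentages of highly cited publications, sorted from high to low,
# so that list position 0 corresponds to rank 1. Not real Leiden Ranking data.
pp_top10 = [22.0, 15.1, 11.8, 9.6, 9.5, 9.4, 9.4, 9.3, 9.2, 9.2, 9.1, 7.0]

# How many universities are practically indistinguishable from the one
# at position 5 (9.4%), and from the one at the top of the list?
print(peers_within(pp_top10, 5, 0.25))
print(peers_within(pp_top10, 0, 0.25))
```

In this toy list, the university in the middle has several neighbours with nearly the same value even though their ranks differ, whereas the university at the top has none. This is the kind of pattern in which a rank difference says little about an actual performance difference.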

We now turn to a second problem. Interpreting a university ranking exclusively in terms of the ranks of universities places a lot of emphasis on a single indicator, making the interpretation highly sensitive to peculiarities of this indicator and to special characteristics of a university. This may lead to strange outcomes and confusion. Suppose for instance that we are interested in the scientific performance of universities in the field of physics. When universities are ranked according to their percentage of highly cited publications in the field of physics, a university without a physics department may actually be ranked first. This may seem strange and hypothetical, but it can occur when a university has produced just a few physics publications and these publications happen to be highly cited. Just think of an engineering university where the engineering departments occasionally produce a physics publication. However, when the university’s absolute number of highly cited publications in the field of physics is taken into account alongside its percentage, it immediately becomes clear that the university is actually a very minor contributor to the field of physics. This illustrates how confusion may arise when too much emphasis is put on a single indicator. From this point of view, the traditional single-indicator focus of many university rankings is problematic. Obtaining a more balanced and deeper understanding of the performance of universities requires multiple indicators to be taken into account together.
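The physics example can be sketched in a few lines. The university names and publication counts below are entirely hypothetical, and the `FieldOutput` class is an illustrative construct rather than anything used in the Leiden Ranking itself.

```python
from dataclasses import dataclass


@dataclass
class FieldOutput:
    name: str
    publications: int   # publications in the field (hypothetical)
    highly_cited: int   # of which highly cited (hypothetical)

    @property
    def share(self) -> float:
        """Percentage of highly cited publications."""
        return 100.0 * self.highly_cited / self.publications


# Invented physics output for three made-up universities.
universities = [
    FieldOutput("Engineering University", publications=4, highly_cited=2),
    FieldOutput("Large Physics University", publications=5000, highly_cited=900),
    FieldOutput("Mid-size University", publications=1200, highly_cited=150),
]

# Rank once by percentage and once by absolute number.
by_share = sorted(universities, key=lambda u: u.share, reverse=True)
by_count = sorted(universities, key=lambda u: u.highly_cited, reverse=True)

for u in by_share:
    print(f"{u.name}: {u.share:.1f}% ({u.highly_cited} of {u.publications})")
```

Ranked by percentage, the engineering university with four physics publications comes first. Ranked by the absolute number of highly cited publications, it comes last. Only the two indicators together show that it is a minor contributor to the field.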

Moving beyond just ranking

As already mentioned, the guiding rationale for implementing changes in the 2016 edition of the CWTS Leiden Ranking is reflected by the Moving beyond just ranking motto. Compared with the 2015 edition, the new edition offers essentially the same information, but the presentation of this information on our website has been significantly revised. In addition to a traditional list-based presentation (list view), the website now also offers a chart-based presentation (chart view) as well as a map-based presentation (map view). The chart view enables comparing the performance of universities using two indicators simultaneously. Moreover, this view emphasizes the actual values of the indicators rather than the ranks implied by these values. The map view facilitates easy comparisons between universities that are geographically close to each other.

The traditional list view has also been revised. Based on feedback from our user community, we have learned that the distinction between size-dependent and size-independent indicators sometimes causes problems. An example of a size-dependent indicator is the number of highly cited publications of a university. The corresponding size-independent indicator is a university’s percentage of highly cited publications. In the case of a size-dependent indicator, larger universities will typically have higher values than smaller universities. Size-independent indicators correct for the size of the publication output of a university and are therefore often used to compare universities that differ in research volume. In the 2016 edition of the Leiden Ranking, size-dependent and size-independent indicators are always reported together in the list view. This emphasizes that both types of indicators need to be taken into account to obtain a good understanding of the scientific performance of universities.

Furthermore, unlike previous editions of our ranking, the 2016 edition no longer uses the percentage of highly cited publications as the default indicator for ranking universities. In the new edition, universities are by default ranked simply based on the size of their publication output. Users who would like to rank universities based on their highly cited publications need to choose explicitly between a size-dependent and a size-independent ranking, that is, between a ranking based on the number and a ranking based on the percentage of highly cited publications. We expect that this will induce users to reflect more carefully on the choice between the two types of rankings.
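The behaviour of the revised list view can be approximated as follows. This is only a sketch under stated assumptions: the function `list_view`, its `order_by` parameter, the field names, and the figures are all hypothetical and do not describe the actual implementation of the website.

```python
def list_view(rows, order_by="publications"):
    """Return rows with both indicator types reported together.

    `order_by` defaults to publication output; ordering by highly cited
    publications requires an explicit choice between the size-dependent
    'highly_cited' and the size-independent 'share'.
    """
    allowed = {"publications", "highly_cited", "share"}
    if order_by not in allowed:
        raise ValueError(f"order_by must be one of {sorted(allowed)}")
    enriched = [
        {**row, "share": 100.0 * row["highly_cited"] / row["publications"]}
        for row in rows
    ]
    return sorted(enriched, key=lambda row: row[order_by], reverse=True)


# Invented figures for three made-up universities.
rows = [
    {"name": "A", "publications": 10000, "highly_cited": 1000},
    {"name": "B", "publications": 2000, "highly_cited": 400},
    {"name": "C", "publications": 6000, "highly_cited": 300},
]

default_order = [row["name"] for row in list_view(rows)]
count_order = [row["name"] for row in list_view(rows, order_by="highly_cited")]
share_order = [row["name"] for row in list_view(rows, order_by="share")]
print(default_order, count_order, share_order)
```

Each row always carries both the number and the percentage of highly cited publications, while the three orderings differ. The choice of ordering therefore has to be made consciously rather than taken from a default.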

User feedback

We hope you will appreciate the changes we have made in the new edition of the CWTS Leiden Ranking. As always, we very much appreciate your feedback, which is essential for future improvements of the ranking. Suggestions and comments can be provided by responding to this blog post or by contacting us directly using this contact form. We are looking forward to hearing from you!