In the face of the limitations, misuses and abuses of conventional bibliometric-based evaluation, we have recently seen an increasing demand for new types of indicators – for example, indicators of societal impact, open science or RRI (responsible research and innovation). Will ‘better’ and ‘faster’ indicators solve the current controversies on evaluation?

In this new paper, based on my keynote presentation at the 2017 S&T Indicators Conference in Paris (see video recording), I argue that merely adding more indicators is a misguided way to improve quantitative evaluation if the goal is to increase the social value of research.

Having more indicators can indeed be helpful when they represent valuable research activities not previously captured by conventional indicators. However, the deeper problems with current quantitative evaluation do not lie in the indicators themselves. They lie in the role and place of indicators in STI governance: their role as a justification of efficiency rather than of genuine research missions such as curiosity or social wellbeing, and their place far from the contexts of application, out of touch with knowledge users.

Indicators for pluralising research evaluation

Adopting an agenda for the democratisation of research and innovation, I propose a framework for re-positioning STI indicators in policy in such a way that they help reconnect decision making with researchers and societal stakeholders.

The argument is that scientometrics has been framed in technical terms, paying scant attention to its context of use. Research policy takes place under conditions of uncertainty and lack of value consensus, and in these circumstances knowledge formation and decision making are inevitably entangled (Pielke, 2007). Through contextualisation and participation, indicators can take into account more diverse assumptions and values, thus making decision taking more sensitive to local uncertainties and values (Stirling et al., 2007). Pluralisation of perspectives enriches evaluation.

A research agenda based on contextualisation and participation

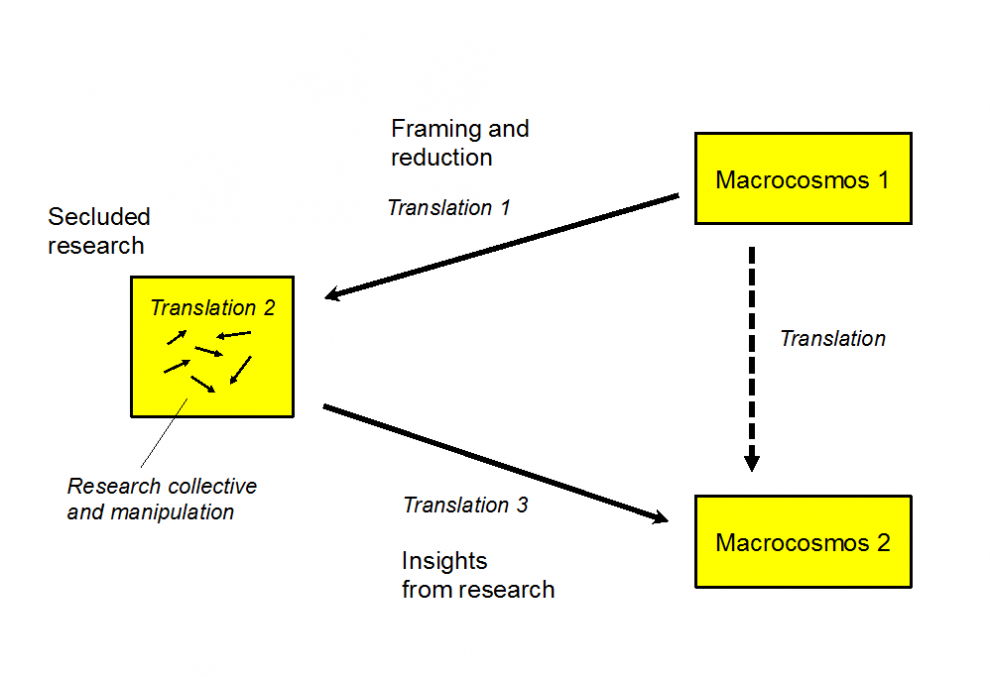

To think about how to contextualise indicators, let us build on a model of research by Callon, Lascoumes and Barthe (2009) based on the notion of translation. As outlined in Figure 1, the process of translation of the unintelligible world to an understandable world involves three stages of translation:

The first is that of the reduction of the big world (the macrocosm) to the small world (the microcosm) of the laboratory. The second stage [secluded research] is that of the formation and setting to work of a restricted research group that, relying on a strong concentration of instruments and abilities, devises and explores simplified objects. The third stage is that of the always perilous return to the big world: Will the knowledge and machines produced in the confined space of the laboratory be able to survive and live in this world? (p. 148)

In my view, most scientometrics nowadays fits the notion of secluded research (in translation 2), i.e. a research field using a highly reduced description of the macrocosm (in our case, the STI systems) in terms of limited data on publications, patents, spin-off counts, and related statistics.

As an alternative, I propose that the design and use of STI indicators should take place not only in ‘secluded spaces’ such as scientometric laboratories, but also with the participation of stakeholders, so as to take their contexts into consideration. ‘Indicators in the wild’ would be the metaphor for this contextualised and participatory work of constructing quantitative evidence for decision making.

Figure 1. Callon’s three stages of translation. Based on Callon, Lascoumes and Barthe (2009, p. 69).

In practical terms, this means three lines of action:

- To continue ongoing efforts to expand the data sources, processing and visualisation techniques used in scientometrics, and to broaden the research communities (particularly those in qualitative modes) involved in developing scientometrics (in translation 2).
- To engage with a more varied set of stakeholders and develop forms of quantitative evidence that facilitate their contextualisation through participation, allowing for plural and conditional advice (in translation 3).
- To open up analytical framings, so as to foster a more balanced attention to diverse STI options – particularly in STI policies aiming at societal goals (in translation 1).

Towards mixed-methods in S&T indicators

For this transformation towards contextual indicators to occur, scholarship in STI indicators needs to be more engaged with other research fields. This does not mean rejecting the focus on statistical analysis, but rather complementing it with qualitative methods that can contribute to mixed approaches, and with new quantitative methods that facilitate the scrutiny of data analyses by stakeholders.

I am aware that embracing participatory approaches and contextual tinkering with data goes against the rhetoric of big data and the increasing standardisation of scientometric practices. Thus, we have two opposing epochal shifts. On the one hand, there is a shift in the political economy of research, with pressures from infrastructure developers and commercial companies towards further managerialism and ‘data-driven evaluation platforms’. On the other hand, there is the realisation by influential stakeholders (e.g. signatories of DORA) that indicators cannot simply be expanded, but that their role in research governance needs to move towards sensitivity to epistemic diversity, pluralism and social robustness.

If research genuinely aims to contribute to human wellbeing, it is time to listen to the call for democratising science with new approaches to STI indicators – beyond narrow-minded databases, out in the wild.