Academic evaluation regimes set up to quantify the quality of research, scholars and institutions have been widely criticized because of the detrimental effects they have on academic environments as well as on knowledge production itself. The evaluative inquiry is a recent prompt for a more exploratory, less standardized way of doing academic evaluations. At CWTS in Leiden, we have experimented with this prompt in the context of two commissioned projects, advising a theological department and university on the self-assessment element of the Dutch evaluation protocol. With these projects in mind I propose four moves to give further shape to the evaluative inquiry’s method (how), focal point (what), subject (who) and ambition (to what end). Versatile methods steer us away from our obsession with representation and throw us into a reconsideration of the where and how of academic values. A contextual focus changes the academic preoccupation with the excellent individual to the building of common worlds. In knowledge diplomacy the analyst relinquishes her desires for standardization and compatibility for the generative navigation of incongruent knowledge systems. And, lastly, in accountable conversations, accountability is built in ongoing engagements rather than performed in a single evaluation report.

Versatile methods: from representation to reconsideration

Metrics play an important role in the representation and communication of academic excellence. Many initiatives problematize this role (among others, DORA and the Leiden Manifesto), arguing that metrics and citation scores alone aren’t appropriate instruments to represent academic excellence. With the evaluative inquiry we build on these initiatives without endorsing the, in our view, unproductive dichotomy between quantitative and qualitative ways of evaluating research. Instead, we propose versatile methods, allowing ourselves to pick from a range of methods such as interviews, workshops, and contextual response analyses. Versatile methods do not just help us know scientific environments in additional ways. More importantly, they offer a range of impulses that compel project partners as well as analysts to rethink established ways of evaluating academic quality.

For one of the projects we decided on a combination of interviews and the less familiar method of a design thinking workshop. As participants would be engaged in unusual ways, the workshop seemed a perfect tool to get them to think outside the box and gain additional insights into the workings of the department. Not all theologians valued the workshop as a meaningful exercise, however. When we started to build their theological ecosystems with pipe cleaners, paperclips and Lego figurines, one theologian frowned and walked away. We, too, didn’t immediately know what to make of our experimental methodology or what epistemological status to grant to a full day’s worth of crafty material and playfully invented fables and rituals. This disconcertment compelled us to reconsider some of the entrenched assumptions about the what, where, how and who of academic quality. These conversations offer a mode of inquiry that is radically different from established evaluative protocols, quantified or not, as they do not start from institutionalized parameters.

Theological ecosystem with Lego figurines created during the workshop

Contextual focus: from individuals to building common worlds

Academic evaluations are typically constructed around the idea that academic quality is a value that belongs to an individual unit (a scholar, department or institution). Adding societal relevance to the ledger of academic accounting hasn’t moved the focus away from individual excellence: publications aren’t enough to demonstrate excellence, one now has to prove societal relevance as well. This focus on the individual renders academic organization and society interesting and valuable only to the extent that they produce individual excellence. The evaluative inquiry moves away from the individual and, instead, takes as its focal point the tangled feedback relations between academic themes and different academic and social contexts. Contextual focus, I propose, is the element of the evaluative inquiry that detects these vibrant relations and moves them center stage.

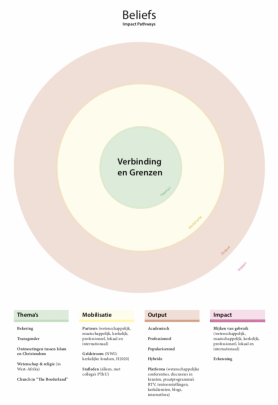

An example is a disagreement within one of the theological departments about what good science looks like. This disagreement was grounded in differing epistemic commitments coinciding with denominational loyalties, debating for example the nature of evidence for and against theism. One could say this struggle was contextual noise hindering the flourishing of academic units. Instead, we suggested focusing on the conflict as a lively debate on how to collaborate across spiritualities. Participants in this debate could then be considered experts in multi-faith collaboration who could exchange knowledge with societal domains that grapple with similar issues, such as multicultural education or refugees’ integration in Dutch society. Here, individual excellence is decentered in favor of an appreciation of the dense and lively interaction between academic themes and their multiple contexts.

Workshop poster used to discuss the tangled relations between themes and their contexts.

Knowledge diplomacy: from standardization to mediation

One of the critiques of academic evaluation is that it has become a ritual of verification that has turned what is deeply political into mundane bureaucratic matters to be dealt with by experts as mere technicalities. The project with the Catholic theologians made us rethink our own roles. Catholic theologians are guided by the notion of re-actualization, which is an age-old exercise of re-articulating the meaning and form of Christianity in the face of adversity. Re-actualization struck us as a kind of self-evaluation, which made clear to us that evaluators are not the only experts on value. These insights may enrich the repertoire of the evaluator and offer lessons that may be drawn on in subsequent encounters. What is more, the temporal scope and reference to adversity within the idea of re-actualization served as a reminder of the stakes of the evaluation project.

The figure of the diplomat allows us to take the evaluation project seriously as both technical and political, as both furthering our understanding of different knowledge traditions and working towards making the encounter work for all parties involved. Rather than commanding compatibility with a single register of values, as the bureaucrat does, the diplomat negotiates ways forward together, despite incongruence of worldviews or ambitions.

Accountable conversations: from reports to ongoing engagement

Academic evaluations typically have a clear sense of boundaries, cause and effect, beginnings and ends: following methodological protocol yields representative results, evaluators examine the evaluated subject, and the question of academic quality, once opened, is closed with a (bibliometric) report. While we acknowledge the academic work on what goes into keeping boundaries and data stable and clean, the evaluative inquiry wants to engage with the uncertainty of evaluation work and the politics of formats, protocols and endings.

At a recent conference Sarah de Rijcke presented our work around the evaluative inquiry and was asked how we envisioned this approach working within the hierarchies and power struggles of current academia and science policy. One answer could be that, even without being able to offer a definitive analysis, we firmly believe in an ongoing discussion of the fault lines between forms of value, the uncertainties embedded within academic evaluations (from precarious academic positions to the scaffolding that is needed to turn uncertain scientific claims into certain ones), and the politics of choices and cuts that are made. While strongly advocating rigorous analytical work in the field of academic evaluations, the evaluative inquiry is equally about the conversation with academics, policy-makers, and others interested in academic evaluation.

Looking forward

CWTS will continue to experiment with academic evaluations. These four moves around the how, what, who and why of the evaluative inquiry will hopefully turn what might otherwise be considered liabilities in evaluation into sources of inspiration.